Operator PPC Measurement Due Diligence for iGaming

When an iGaming operator evaluates PPCJuice, PPC agency partners, or both, “measurement” is usually the point where vendor promises meet platform constraints, consent requirements, and internal finance realities. This due diligence guide is for operators who need concrete answers: what will be tracked, how it will be validated, where it can break, and what governance prevents drift after launch.

Compliance note: advertising policy and jurisdiction rules vary by market, platform, and license. This article is not legal advice. Your compliance and legal teams should validate the final approach.

Table of Contents

- 1) Define measurement scope and success definitions

- 2) Map the end-to-end data flow

- 3) Attribution model and conversion hierarchy

- 4) Consent, privacy, and jurisdiction constraints

- 5) Tracking QA and ongoing monitoring

- 6) Reporting standards and reconciliation

- 7) Vendor decision criteria (table)

- 8) Operator checklist

1) Define measurement scope and success definitions

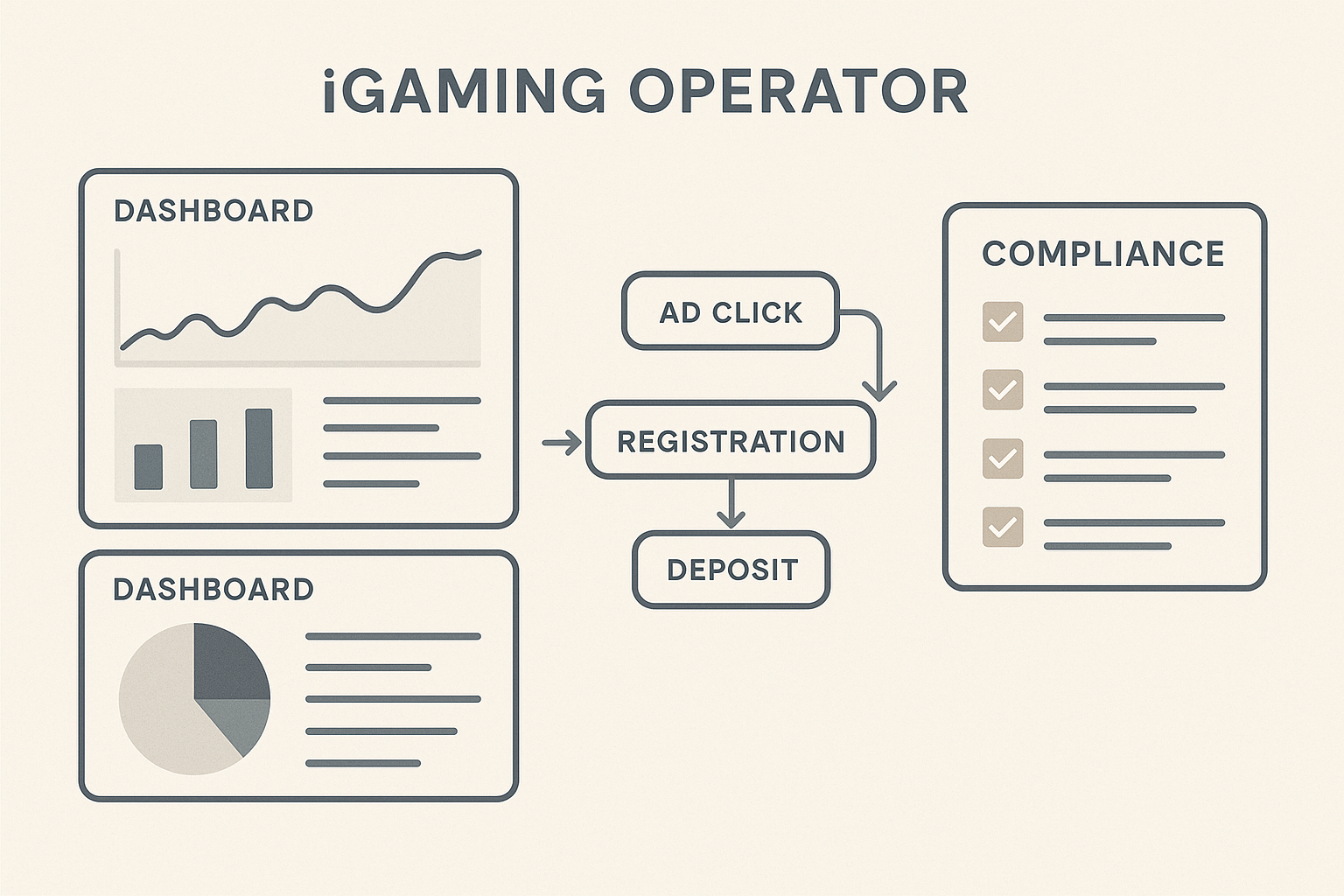

Start by writing down what you will optimize in-platform versus what you will judge as “business success” outside ad platforms. In iGaming, a single “conversion” definition is rarely enough because acquisition quality is sensitive to bonus abuse, KYC pass rates, and deposit behavior.

- Platform conversions: landing page view, registration start, registration complete (where allowed), first deposit, deposit amount, or a proxy event when stricter policies apply.

- Operator KPIs: verified registrations (post-KYC), first-time depositor (FTD), net gaming revenue, chargeback/fraud flags, and retention windows.

- Time horizon: define when you call a user “successful” (e.g., D7 or D30 net deposit) and how that affects bid strategy and reporting cadence.
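
Writing the success definition as code forces the thresholds to be explicit. The sketch below is illustrative only: the `LedgerEntry` shape, the KYC gate, the 7-day default window, and the 20-unit net-deposit threshold are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from decimal import Decimal


@dataclass(frozen=True)
class LedgerEntry:
    """Hypothetical ledger row: one deposit, withdrawal, or reversal."""
    player_id: str
    timestamp: datetime
    amount: Decimal  # positive = deposit; negative = withdrawal or reversal


def net_deposit_in_window(entries, player_id, registered_at, days):
    """Sum a player's ledger amounts within `days` days of registration."""
    cutoff = registered_at + timedelta(days=days)
    return sum(
        (e.amount for e in entries
         if e.player_id == player_id and registered_at <= e.timestamp < cutoff),
        Decimal("0"),
    )


def is_successful(entries, player_id, registered_at, kyc_passed,
                  days=7, threshold=Decimal("20")):
    """Hypothetical rule: KYC passed AND net deposit >= threshold within `days`."""
    if not kyc_passed:
        return False
    return net_deposit_in_window(entries, player_id, registered_at, days) >= threshold
```

Because the window and threshold are parameters, the same ledger can be scored at D7 for early bid feedback and at D30 for budget reviews without the two definitions silently drifting apart.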

Due diligence question for any partner: which events can realistically be used for optimization under your target geos and platform policies, and what is the fallback plan when “deposit” cannot be directly passed back?

2) Map the end-to-end data flow

Measurement due diligence is easiest when you draw a simple map from click to finance. Ask vendors to document:

- Identifier capture: how gclid/wbraid/gbraid and UTM parameters are stored, and how long they persist across sessions and devices.

- Event generation: where events fire (tag manager, server, app SDK) and how you prevent duplicates.

- Transport: pixel vs server-to-server vs API (e.g., offline conversions). Confirm retry logic and error handling.

- Storage: where raw events live (data warehouse or logs), who owns access, and what retention policies apply.

- Reconciliation: how ad spend, click data, and player ledger data are matched for finance-ready reporting.
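
For identifier capture, a vendor should be able to show exactly which query parameters are read and what is discarded. A minimal Python sketch, assuming the click IDs and UTM keys arrive as landing-page query parameters; persistence (cookie or server-side session, and for how long) is deliberately left out because it depends on your stack and consent setup.

```python
from urllib.parse import urlsplit, parse_qs

# Click identifiers and UTM keys named in the data-flow checklist.
CLICK_ID_KEYS = ("gclid", "wbraid", "gbraid")
UTM_KEYS = ("utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content")


def capture_attribution_params(landing_url):
    """Extract click IDs and UTM parameters from a landing-page URL.

    Only whitelisted keys are kept (first value of each), so any other query
    parameters, including accidental PII, are never stored.
    """
    query = parse_qs(urlsplit(landing_url).query)
    captured = {}
    for key in CLICK_ID_KEYS + UTM_KEYS:
        values = query.get(key)
        if values and values[0]:
            captured[key] = values[0]
    return captured
```

The whitelist approach means a new parameter is ignored until someone deliberately adds it, which is the safer default for iGaming landing pages.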

Operator tip: if a partner cannot explain the data flow in one page, measurement issues will surface later as “mysterious” attribution swings.
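
The duplicate-prevention and retry questions from the data-flow list can be made concrete with a sketch like this. It assumes each event carries a stable `event_id` assigned where the event is created, and that `send` is a hypothetical transport callable (pixel, server-to-server, or offline-conversion upload) that raises the hypothetical `TransientError` on retryable failures. The in-memory `sent_ids` set stands in for what would need to be durable storage in production.

```python
import time


class TransientError(Exception):
    """Hypothetical error a transport raises for retryable failures (timeouts, 5xx)."""


def deliver_event(event, send, sent_ids, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Deliver one event at most once, retrying transient failures with backoff.

    Returns True if the event is delivered (now or previously),
    False if all attempts failed.
    """
    event_id = event["event_id"]
    if event_id in sent_ids:
        return True  # duplicate fire: already delivered, do not resend
    for attempt in range(max_attempts):
        try:
            send(event)
        except TransientError:
            if attempt == max_attempts - 1:
                return False  # give up; the caller should log this for replay
            sleep(base_delay * (2 ** attempt))  # exponential backoff: 0.5s, 1s, 2s, ...
        else:
            sent_ids.add(event_id)
            return True
    return False
```

The `sleep` parameter is injectable so the retry path can be tested without waiting.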

3) Attribution model and conversion hierarchy

In iGaming, you typically need a two-layer approach: platform attribution for bidding, and operator attribution for decision-making. During due diligence, align on a conversion hierarchy:

- Primary optimization event: the highest-quality event you can reliably pass with consent and policy alignment (often registration complete or deposit proxy; sometimes FTD via offline conversion upload).

- Secondary events: steps that diagnose funnel breakage (landing view, reg start, KYC initiated, KYC passed).

- Quality flags: postbacks that identify reversals, self-exclusions, or fraud markers, used for exclusion lists and performance interpretation.
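
Recording the hierarchy as data, rather than in a slide, makes the per-geo fallback explicit: when policy blocks the preferred event, the next permitted one is chosen. The event names and their ordering below are hypothetical and do not correspond to any platform's conversion-action names.

```python
from enum import Enum


class Tier(Enum):
    PRIMARY = "primary"            # candidates for bidding
    SECONDARY = "secondary"        # funnel diagnostics
    QUALITY_FLAG = "quality_flag"  # exclusions and interpretation


# Hypothetical hierarchy; primary events are ordered from highest to lowest quality.
HIERARCHY = [
    ("ftd_offline", Tier.PRIMARY),
    ("deposit_proxy", Tier.PRIMARY),
    ("registration_complete", Tier.PRIMARY),
    ("landing_view", Tier.SECONDARY),
    ("registration_start", Tier.SECONDARY),
    ("kyc_initiated", Tier.SECONDARY),
    ("kyc_passed", Tier.SECONDARY),
    ("reversal", Tier.QUALITY_FLAG),
    ("self_exclusion", Tier.QUALITY_FLAG),
    ("fraud_marker", Tier.QUALITY_FLAG),
]


def choose_primary_event(allowed_events):
    """Return the highest-quality primary event permitted in a geo, or None."""
    for name, tier in HIERARCHY:
        if tier is Tier.PRIMARY and name in allowed_events:
            return name
    return None


def events_in_tier(tier):
    """List event names in one tier, in hierarchy order."""
    return [name for name, t in HIERARCHY if t is tier]
```

For example, in a geo where only `registration_complete` and `deposit_proxy` can be passed, `choose_primary_event` returns `deposit_proxy`.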

Ask specifically whether the partner will use last-click, data-driven attribution (where available), or a blended model—and how they will keep reporting consistent when platforms reattribute over time.
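
To see why the choice matters, compare two simple rules-based models on the same converting paths. This is a toy illustration with hypothetical channel names; platform data-driven attribution uses its own modeling and cannot be reproduced this way.

```python
from collections import defaultdict


def last_click(paths):
    """Give each conversion's full credit to the last touchpoint in its path."""
    credit = defaultdict(float)
    for path in paths:
        if path:
            credit[path[-1]] += 1.0
    return dict(credit)


def linear(paths):
    """Split each conversion's credit equally across all touchpoints in its path."""
    credit = defaultdict(float)
    for path in paths:
        for channel in path:
            credit[channel] += 1.0 / len(path)
    return dict(credit)
```

With paths `[["ppc_generic", "affiliate", "ppc_brand"], ["ppc_generic"]]`, last-click gives `ppc_brand` and `ppc_generic` one conversion each, while linear gives `ppc_generic` about 1.33 and the other two about 0.33 each. The same data tells different budget stories, which is why the model has to be agreed in writing.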

4) Consent, privacy, and jurisdiction constraints

iGaming PPC measurement must respect consent status and local rules on data use. Your due diligence should verify:

- Consent mode behavior: what fires when users deny analytics or ads consent, which modeled conversions you will accept, and how they are labeled in reporting.

- Data minimization: no unnecessary PII in URLs, tags, or conversion payloads; hashing approaches if identifiers are used.

- Geo-specific controls: separate tag configurations by jurisdiction where required, including age gating and responsible gambling messaging constraints.

- Vendor access boundaries: who can view player-level data versus aggregated metrics, and how access is audited.
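
Data minimization is easiest to verify when the stripping and hashing logic is explicit. A minimal sketch, assuming a hypothetical list of PII query keys: trim-and-lowercase normalization followed by SHA-256 is a common baseline, but each ad platform documents its own normalization rules, so check them before uploading. Hashing reduces exposure; it does not make the data anonymous, so your legal team still decides what may be sent.

```python
import hashlib
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical list of query keys treated as PII; extend it from your data map.
PII_QUERY_KEYS = {"email", "phone", "first_name", "last_name", "dob"}


def normalize_and_hash_email(email):
    """Trim and lowercase an email, then return its SHA-256 hex digest."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def strip_pii_from_url(url):
    """Drop PII query parameters before a URL is logged or placed in a tag payload."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in PII_QUERY_KEYS]
    return urlunsplit(parts._replace(query=urlencode(kept)))
```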

Define in advance what happens when policy changes force you to reduce signal (e.g., moving from client-side pixels to server-side events with stricter filtering).
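
One way to make the reduced-signal fallback concrete is a server-side filter that forwards only the fields a jurisdiction's policy allows. The jurisdiction keys, allowed-field sets, and the rule that "no ads consent means no ad-platform event" are all hypothetical placeholders for decisions your compliance and legal teams own.

```python
# Hypothetical per-jurisdiction policy: payload fields that may be forwarded server-side.
JURISDICTION_POLICY = {
    "default": {"event_name", "event_id", "timestamp", "gclid", "wbraid", "gbraid", "value"},
    "strict": {"event_name", "event_id", "timestamp"},
}


def filter_server_event(event, jurisdiction, ads_consent):
    """Return the subset of `event` allowed to be forwarded, or None to drop it."""
    if not ads_consent:
        return None  # hypothetical rule: no ads consent, no ad-platform event
    # Unknown jurisdictions fall back to the strictest policy (fail closed).
    allowed = JURISDICTION_POLICY.get(jurisdiction, JURISDICTION_POLICY["strict"])
    return {k: v for k, v in event.items() if k in allowed}
```

Falling back to the strictest policy means a missing configuration fails closed rather than leaking identifiers.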

5) Tracking QA and ongoing monitoring

Due diligence is not just “is tracking installed?” It is “can we detect when it breaks?” Require a QA plan that includes:

- Pre-launch test scripts: test accounts, test deposits where permissible, and a shared checklist of expected parameters and event counts.

- Duplicate and missing event detection: rules for identifying spikes from double firing or drop-offs from tag suppression.

- Change control: how site releases, payment provider changes, or CMP updates are communicated and validated.

- Alerting: thresholds for conversion rate, event volume, or spend-without-conversions anomalies.
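
The detection and alerting items above reduce to a few simple checks that can run daily against event and spend tables. The thresholds (50% drop, 2x spike, a minimum baseline of 20 events, 500 currency units of spend) are hypothetical defaults to tune per account, not benchmarks.

```python
from statistics import mean


def volume_alert(trailing_counts, today, drop_ratio=0.5, spike_ratio=2.0, min_baseline=20):
    """Compare today's event count with the trailing average.

    Returns "drop" (possible tag suppression), "spike" (possible double firing),
    or None. Skips the check when the baseline is too small to be meaningful.
    """
    if not trailing_counts:
        return None
    baseline = mean(trailing_counts)
    if baseline < min_baseline:
        return None
    if today < baseline * drop_ratio:
        return "drop"
    if today > baseline * spike_ratio:
        return "spike"
    return None


def duplicate_event_ids(events):
    """Return event_ids that appear more than once, a common sign of double firing."""
    seen, dupes = set(), set()
    for event in events:
        event_id = event["event_id"]
        if event_id in seen:
            dupes.add(event_id)
        seen.add(event_id)
    return dupes


def spend_without_conversions(spend, conversions, min_spend=500.0):
    """Flag a campaign-day that spent at least min_spend with zero conversions."""
    return spend >= min_spend and conversions == 0
```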

Ask who owns QA: the operator, the agency, PPCJuice, or a shared RACI. Measurement fails most often at handoffs.

6) Reporting standards and reconciliation

Operators often receive three numbers for “FTDs” (platform, affiliate, and internal BI). Due diligence should force a single source of truth per purpose:

- For bidding: platform-reported conversions aligned to the configured primary event.

- For budget allocation: operator BI numbers with defined cutoffs (e.g., KYC-approved FTDs only).

- For finance: spend from invoices/ad accounts matched to approved attribution windows.
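
A minimal sketch of the budget-allocation view and a platform-vs-BI gap check. The `FTDRecord` shape and the 10% tolerance are hypothetical; some gap is normal because platforms and BI differ in attribution windows, cross-device matching, and modeled conversions, so the tolerance is a policy choice to agree in writing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FTDRecord:
    """Hypothetical BI row for one first-time deposit."""
    player_id: str
    kyc_approved: bool


def bi_ftd_count(records):
    """Budget-allocation view: KYC-approved FTDs only, counted once per player."""
    return len({r.player_id for r in records if r.kyc_approved})


def reconcile(platform_ftds, bi_ftds, tolerance=0.10):
    """Compare platform-reported FTDs with operator BI and flag gaps above tolerance."""
    if bi_ftds == 0:
        return {"gap_pct": None, "within_tolerance": platform_ftds == 0}
    gap = (platform_ftds - bi_ftds) / bi_ftds
    return {"gap_pct": round(gap * 100, 1), "within_tolerance": abs(gap) <= tolerance}
```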

Require definitions in writing: attribution window, currency conversion, VAT handling, chargeback treatment, and how player reversals are reflected in historical reports.
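
Two of those definitions, currency conversion and reversal treatment, change the numbers directly. The sketch below assumes a fixed, agreed FX table (the rates shown are made-up placeholders, not market rates) and shows both ways of booking a reversal: restating the original deposit date, or booking it on the date the reversal occurs.

```python
from collections import defaultdict
from decimal import Decimal, ROUND_HALF_UP

# Placeholder FX table into the reporting currency (EUR). Made-up rates for illustration.
FX_TO_EUR = {"EUR": Decimal("1"), "GBP": Decimal("1.20"), "SEK": Decimal("0.10")}


def to_reporting_currency(amount, currency, fx=FX_TO_EUR):
    """Convert an amount (pass str or Decimal, not float) and round half-up to cents."""
    return (Decimal(amount) * fx[currency]).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def net_deposits_by_date(deposits, reversals, restate_history=True):
    """Net deposits per date after reversals and chargebacks.

    deposits:  {deposit_id: (deposit_date, amount)} in reporting currency
    reversals: [(deposit_id, reversal_date, amount)] in reporting currency
    restate_history=True books each reversal on the original deposit date, so
    past reports change; False books it on the reversal date instead.
    """
    totals = defaultdict(Decimal)
    for deposit_date, amount in deposits.values():
        totals[deposit_date] += amount
    for deposit_id, reversal_date, amount in reversals:
        booking_date = deposits[deposit_id][0] if restate_history else reversal_date
        totals[booking_date] -= amount
    return dict(totals)
```

Either option is defensible; historical reports only match across teams if everyone uses the same one.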

7) Vendor decision criteria (table)

| Area | What to ask | Good sign | Risk sign |
|---|---|---|---|
| Conversion design | Which events are primary/secondary, and why? | Clear hierarchy tied to policy and funnel reality | Single “signup” KPI with no quality layer |
| Offline conversions | Can you upload FTD/KYC-approved outcomes? | Documented process, IDs stored safely, retry logic | “We’ll figure it out later” or manual spreadsheets |
| Consent/CMP | How do tags behave by consent state? | Consent-aware firing and modeled vs observed labels | Unclear consent handling or mixed configurations |
| QA & monitoring | How do you detect tracking breaks within 24 hours? | Alerts + change control + routine audits | Relies on monthly reporting to spot issues |
| Governance | Who owns access, naming, and documentation? | RACI, access logs, naming conventions | Shared logins, no documentation, ad hoc changes |

8) Operator checklist

- Document conversion definitions: platform event(s) vs operator KPI(s), with attribution windows.

- Confirm identifier capture for clicks (gclid/wbraid/gbraid) and UTM persistence across domains and app/web.

- Validate consent mode/CMP behavior by jurisdiction; ensure reporting separates observed vs modeled conversions where applicable.

- Require an offline conversion plan for FTD and KYC-approved milestones (IDs, hashing, storage, retry, audit trail).

- Establish QA scripts and ownership (RACI) for pre-launch and after every site/CMP/payment change.

- Set monitoring alerts for missing events, duplicates, and spend-without-conversions anomalies.

- Standardize naming conventions for campaigns, ad groups, and conversion actions to support clean reporting.

- Agree on reconciliation: ad platform data vs internal BI vs finance, including reversals and chargebacks.

- Define access and data boundaries for partners; avoid shared credentials and document permissions.

- Schedule a quarterly measurement review to re-check policy changes and tracking drift.
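
Naming conventions only help reporting if they are enforced. A small validator can run before campaigns launch or when platform exports are ingested. The `{geo}_{platform}_{type}_{descriptor}` pattern and its allowed values are a hypothetical example, not a recommended standard.

```python
import re

# Hypothetical convention, e.g. "uk_gads_search_brand".
# Geo, platform, and type values are placeholders to adapt.
CAMPAIGN_NAME = re.compile(
    r"^(?P<geo>[a-z]{2})_"
    r"(?P<platform>gads|msads)_"
    r"(?P<type>search|pmax|display)_"
    r"(?P<descriptor>[a-z0-9]+(?:-[a-z0-9]+)*)$"
)


def parse_campaign_name(name):
    """Return the name's components as a dict, or None if it breaks the convention."""
    match = CAMPAIGN_NAME.match(name)
    return match.groupdict() if match else None
```

Parsing rather than just validating means the same function can split names into reporting dimensions (geo, platform, campaign type) without manual mapping.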

If you want a practical next step, ask PPCJuice or any agency partner to deliver a one-page measurement map, a conversion hierarchy, and a QA/monitoring plan before you commit budget. The goal is not “perfect attribution,” but predictable, auditable measurement that survives policy changes and site releases.